I also think that defining this additional space, the constant negotiation between the internal self and its needs, is important, because if it were properly modeled it would make the interaction with the environment more realistic.

We assume normal agents have goals, but how they synthesize goals from the plethora of needs to be satisfied hasn't been defined yet. In fact, "goal" is not a primitive term but a constructed one. We have needs, we see circumstances around us, we rank those circumstances by their possible outcomes and by the needs they can satisfy, and then we define a goal: an image, a hybrid between our need and the circumstance we think will satisfy it best.
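The construction described above (needs, circumstances ranked by the needs they satisfy, then a goal pairing a need with its best circumstance) can be sketched in a few lines. This is a hypothetical illustration, not an established model: `Goal`, `synthesize_goals`, the urgency and satisfaction numbers in [0, 1], and the example needs and circumstances are all assumptions made for the sketch.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Goal:
    """A constructed goal: a need paired with the circumstance expected to satisfy it best."""
    need: str
    circumstance: str
    expected_satisfaction: float


def synthesize_goals(
    needs: Dict[str, float],
    circumstances: Dict[str, Dict[str, float]],
) -> List[Goal]:
    """Build goals from needs instead of receiving them ready-made.

    needs: need name -> urgency in [0, 1] (hypothetical representation)
    circumstances: circumstance name -> {need name: expected satisfaction in [0, 1]}
    Returns one goal per satisfiable need, most pressing first.
    """
    if not circumstances:
        return []
    scored = []
    for need, urgency in needs.items():
        # Rank every circumstance by how well we expect it to satisfy this need.
        best_score, best_name = max(
            (effects.get(need, 0.0), name) for name, effects in circumstances.items()
        )
        if best_score > 0:
            scored.append((urgency * best_score, Goal(need, best_name, best_score)))
    # Most pressing first: urgency of the need times expected satisfaction.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [goal for _, goal in scored]


needs = {"food": 0.9, "shelter": 0.8, "feel_useful": 0.5}
circumstances = {
    "office_job": {"food": 0.8, "shelter": 0.8, "feel_useful": 0.2},
    "volunteering": {"feel_useful": 0.9},
}
for goal in synthesize_goals(needs, circumstances):
    print(goal.need, "->", goal.circumstance)
```

With these made-up numbers the job becomes the goal-image for food and shelter, while the need to feel useful gets paired with a different circumstance altogether, which is exactly the kind of split a single hard-coded goal would hide.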

As an example, we are motivated to go to work because we need to eat and have a roof over our heads, and (but not necessarily) because we need to feel useful and valuable and to achieve something. Quite a number of our needs might be tied up in our going to work, but going to work is a circumstance we chose in order to satisfy those needs. The same circumstance can act as both a satisfier and a frustrating agent. I might be very satisfied with the pay of my job, so it could cover my needs for food and shelter quite nicely, but I can also feel that, because I have to satisfy those needs, I occupy my time with a job that doesn't let me follow my own path, use my talents, develop my strengths, and focus on my real interests. This is much closer to the human complexity we actually encounter than the agent's simplified world, and not only because we made the environment more complex, but also because we made the agent's internal landscape more refined and complex.
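One way to picture a circumstance that both satisfies and frustrates is to give each of its effects a sign. In this hypothetical `appraise` function, a circumstance is a map from needs to signed effects in [-1, 1], weighted by how much the agent currently cares about each need; the numbers below are invented to mirror the job example and are not measurements of anything.

```python
from typing import Dict, Tuple


def appraise(effects: Dict[str, float], weights: Dict[str, float]) -> Tuple[float, float, float]:
    """Split a circumstance's weighted effects into satisfaction and frustration.

    effects: need -> signed effect in [-1, 1] (positive satisfies, negative frustrates)
    weights: need -> how much the agent currently cares about that need
    Returns (satisfaction, frustration, net). Needs without a weight count as 0.
    """
    satisfaction = 0.0
    frustration = 0.0
    for need, effect in effects.items():
        weighted = effect * weights.get(need, 0.0)
        if weighted >= 0:
            satisfaction += weighted
        else:
            frustration += weighted
    return satisfaction, frustration, satisfaction + frustration


# Hypothetical numbers for the well-paid but unfulfilling job.
job = {"food": 0.9, "shelter": 0.9, "use_talents": -0.7, "follow_interests": -0.6}
weights = {"food": 1.0, "shelter": 1.0, "use_talents": 0.8, "follow_interests": 0.8}
satisfaction, frustration, net = appraise(job, weights)
print(f"satisfaction={satisfaction:.2f} frustration={frustration:.2f} net={net:.2f}")
```

Keeping satisfaction and frustration as separate numbers, rather than only returning the net, matters here: a positive net can still hide a large frustration term, and that term is what the later savings example turns on.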

The algorithm for choosing which needs to satisfy with maximum utility could therefore be a very complex one, considering the number of choices we can make in our very resourceful environments, and the fact that we can't just compute outcomes for a specific set of choices without taking into account that the environment itself might change. And we are not even discussing dramatic changes: small changes can make quite a difference.
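A standard textbook way to make "maximum utility in a changing environment" concrete is expected utility over possible environment states. This is a swapped-in technique used only for illustration, not a claim about how people actually decide; the states, options and utilities are invented. The sketch shows how a small shift in what the agent believes about the environment flips the best choice.

```python
from typing import Dict, List

# Hypothetical utility of each option in each possible state of the environment.
UTILITIES: Dict[str, Dict[str, float]] = {
    "stable": {"stay_in_job": 0.6, "switch_job": 0.9},
    "downturn": {"stay_in_job": 0.5, "switch_job": 0.1},
}


def expected_utility(option: str, beliefs: Dict[str, float]) -> float:
    """Average the option's utility over environment states, weighted by belief."""
    return sum(p * UTILITIES[state][option] for state, p in beliefs.items())


def choose(options: List[str], beliefs: Dict[str, float]) -> str:
    """Pick the option with the highest expected utility under the current beliefs."""
    return max(options, key=lambda option: expected_utility(option, beliefs))


options = ["stay_in_job", "switch_job"]
print(choose(options, {"stable": 0.6, "downturn": 0.4}))  # switch_job
print(choose(options, {"stable": 0.5, "downturn": 0.5}))  # stay_in_job
```

Moving the belief in a stable environment from 0.6 to 0.5 is enough to reverse the decision, which is the "small changes can make quite a difference" point in miniature.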

Let's say you are working in that utterly boring job to get the money to put yourself through uni, but what you can realistically save every month is about 10% of your earnings. You also know how much uni is going to cost you, and that it's going to take you a couple of years to save up for it. In this case, being frustrated and having to take lots of days off work, or simply spending more on things you like (things that make you feel you still like yourself enough to go after what you really want), might work against you: you might end up not putting that money aside, even though you are still putting yourself through the frustration of the job. What happens here? The need to do what you want to do is overwhelming you, and without being aware of it you are satisfying it in a different way. You promised yourself to stay in that frustrating situation so that you could give yourself the expected reward, but the circumstance frustrates you more than you anticipated, and you are really not making much progress towards your goals at all.
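The arithmetic of this scenario can be sketched as a small simulation. The leak model is an assumption made purely for illustration: frustration rises by a fixed amount each month and is relieved by spending a proportional share of that month's planned saving. The income, savings rate and uni cost are also hypothetical, chosen so the frustration-free plan takes exactly two years.

```python
from typing import Optional


def months_to_goal(
    income: float,
    save_percent: float,
    target: float,
    frustration_per_month: float = 0.0,
    leak_per_frustration: float = 0.0,
    max_months: int = 120,
) -> Optional[int]:
    """Months until savings reach `target`, or None if not reached within `max_months`.

    Hypothetical leak model: frustration rises by `frustration_per_month` each month,
    and that month's planned saving shrinks by planned * leak_per_frustration * frustration,
    capped so the agent never spends more than the planned saving itself.
    """
    planned = income * save_percent / 100
    saved = 0.0
    frustration = 0.0
    for month in range(1, max_months + 1):
        frustration += frustration_per_month
        leaked = min(planned, planned * leak_per_frustration * frustration)
        saved += planned - leaked
        if saved >= target:
            return month
    return None


print(months_to_goal(2000, 10, 4800))             # 24: the "couple of years" plan
print(months_to_goal(2000, 10, 4800, 0.01, 1.0))  # 29: mild, growing frustration
print(months_to_goal(2000, 10, 4800, 0.05, 1.0))  # None: still in the job, goal never reached
```

In the last case the agent keeps paying the full cost of the job while the savings stall at about 1,900, which is the trap described above: the circumstance is still endured, but it has stopped serving the goal it was chosen for.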

That is all because we humans don't have perfect self-control, so we always need to make assumptions about how many internal resources we still have. And that is not only about how much energy we have to work before being hungry again, or how many hours we have before needing to sleep; it has to do with many other psychological components that are much harder to compute.

So how do we orient ourselves in this fuzzy environment? At this point, the internal environment seems to be creating many more problems for us than the external one, which at least is out there: we can look at it, measure it, and it seems more objective.

Well, we learn. We learn about ourselves over time, and we are even taught about ourselves by learning about other people's experience of dealing with themselves. That is perhaps why we see this blossoming self-help literature. Science has dealt a lot with our problems of getting resources out of the environment, so we are quite enlightened and can think in non-primitive, non-religious terms about getting our food, building our shelters and keeping ourselves safe from bad weather. But when it comes to dealing with our own selves, we can be quite primitive.

That is why we still look admiringly at many forms of ancient spirituality: they tell us a lot about how other people have dealt with their own selves, and I think not only psychology but cog sci should be about that as well. We don't only have to deal with our beliefs, emotions and needs, but also with our performance: with internal ways of mobilising our resources, our creativity and our intelligence, and with understanding our own thought processes. That is why cog sci is only at its beginning: all these realms remain unexplored, at least scientifically.

If the shaman was once the institution (grin) in charge of weather control, and we find that funny now, our grandkids might find it funny that we used to assign self-knowledge and self-discipline to various spiritual and religious movements. The cognitive scientist and the psychologist might then be the right people for the job of helping you explore the resources of your own intelligence and personality.

Many things could be said about human goals, and perhaps I will say more in a different post. What I want to do now is link all these things to the way an agent has its first experiences, and to how that could be modeled more realistically.

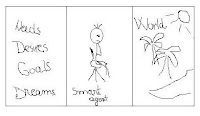

We ourselves come into this world with certain needs, which might reflect our physicality or our personality (which of course can be physically grounded, but has been studied in abstract conceptual terms, so it makes more sense to us to talk about it in those terms anyway; more on the Cartesian divide in a different post).

So why should agents be different? I think it's ok to pre-program needs in our agents. I just don't think it's ok to program goals.

I think a high dose of realism would be added if an agent made up its own mind about its goals, and I will soon put together a little programming example of that. The freedom to decide on your own goals, though, calls for a better interface for interacting with your environment, and for understanding (gosh, I've used the big U word) which objects in that environment can help or hinder your needs and survival.
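As a rough illustration of "pre-program needs, not goals" (a hypothetical sketch, not the programming example promised above), here is an agent whose needs are fixed by the designer but whose goals come only from what it has learned about objects in its environment. The class name, the exponential-averaging update and the example objects are all assumptions made for the sketch.

```python
from collections import defaultdict
from typing import Dict, Optional, Tuple


class Agent:
    """Hypothetical agent whose needs are pre-programmed but whose goals are not."""

    def __init__(self, needs: Dict[str, float], learning_rate: float = 0.5):
        self.needs = dict(needs)  # need -> current urgency, set by the designer
        self.learning_rate = learning_rate
        # Learned estimate of how each object affects each need; unseen pairs read as 0.
        self.beliefs: Dict[str, Dict[str, float]] = defaultdict(lambda: defaultdict(float))

    def experience(self, obj: str, need: str, observed_effect: float) -> None:
        """Move the estimate a step toward what was just observed (exponential averaging)."""
        old = self.beliefs[obj][need]
        self.beliefs[obj][need] = old + self.learning_rate * (observed_effect - old)

    def current_goal(self) -> Optional[Tuple[str, str]]:
        """Return the (need, object) pair with the highest urgency-weighted expected effect."""
        best = None
        for obj, effects in self.beliefs.items():
            for need, effect in effects.items():
                score = self.needs.get(need, 0.0) * effect
                if score > 0 and (best is None or score > best[0]):
                    best = (score, need, obj)
        return None if best is None else (best[1], best[2])


agent = Agent({"hunger": 0.9, "warmth": 0.4})
print(agent.current_goal())                    # None: nothing learned yet
agent.experience("berry_bush", "hunger", 0.8)  # berries helped with hunger
agent.experience("fire", "warmth", 1.0)        # the fire helped with warmth
agent.experience("thorns", "hunger", -0.5)     # thorns hindered foraging
print(agent.current_goal())                    # ('hunger', 'berry_bush')
agent.needs["hunger"] = 0.1                    # after eating, hunger is less urgent
print(agent.current_goal())                    # ('warmth', 'fire')
```

Nothing in the code names a goal in advance: the agent starts with no goal at all, forms one only after experiences tell it which objects help or hinder its needs, and changes that goal as the urgency of its needs shifts.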